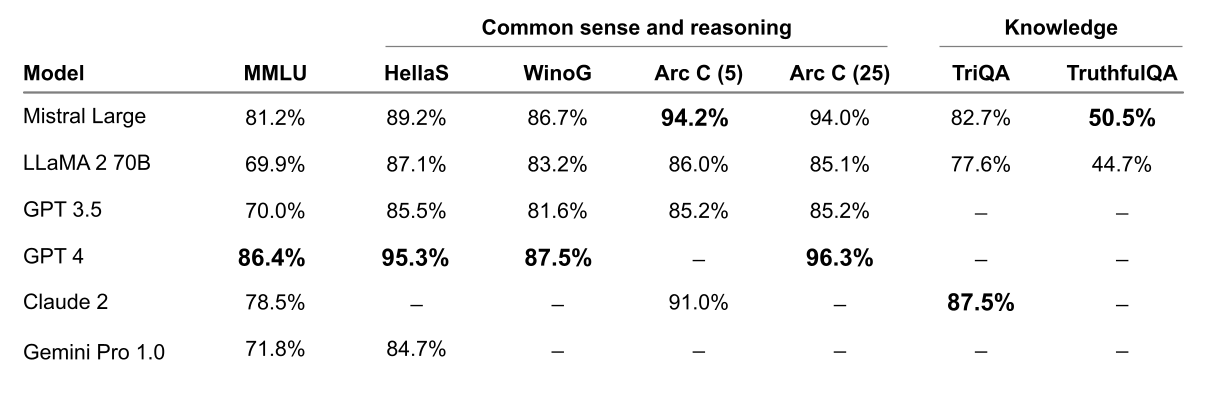

Figure 1: Comparison of GPT-4, Mistral Large (pre-trained), Claude 2, Gemini Pro 1.0, GPT 3.5 and LLaMA 2 70B on MMLU (Measuring massive multitask language understanding).

(IN BRIEF) Mistral AI, an AI company, has introduced Mistral Large, its latest advanced language model, offering top-tier reasoning capabilities and multilingual proficiency. The model is now accessible through Azure, in addition to the company’s own platform. Mistral AI has also launched Mistral Small, optimized for low-latency tasks. Both models support JSON format and function calling. This move aims to democratize AI by making cutting-edge models readily available and user-friendly.

(PRESS RELEASE) PARIS, 26-Feb-2024 — /EuropaWire/ — Mistral AI, a French AI startup, is proud to unveil Mistral Large, our latest breakthrough in language model technology. Boasting unparalleled reasoning capabilities, Mistral Large sets a new standard for text generation and comprehension. Available through la Plateforme and now accessible via Azure, Mistral Large marks a significant milestone in our mission to democratize cutting-edge AI.

In line with our commitment to widespread AI accessibility, Mistral is pleased to announce our collaboration with Microsoft Azure. Developers can now access Mistral Large through Azure AI Studio and Azure Machine Learning. Beta customers have used it with significant success. For the most sensitive use cases, our models can also be deployed in your own environment, with access to our model weights. Read success stories on this kind of deployment, and contact our team for further details.

Mistral Large capacities

We compare Mistral Large’s performance to that of the leading LLMs on commonly used benchmarks.

Mistral Large shows powerful reasoning capabilities. In the following figure, we report the performance of the pretrained models on standard benchmarks.

Figure 2: Performance of the leading LLMs on the market on widely used common sense, reasoning and knowledge benchmarks: MMLU (Measuring massive multitask language understanding), HellaSwag (10-shot), WinoGrande (5-shot), Arc Challenge (5-shot), Arc Challenge (25-shot), TriviaQA (5-shot) and TruthfulQA.

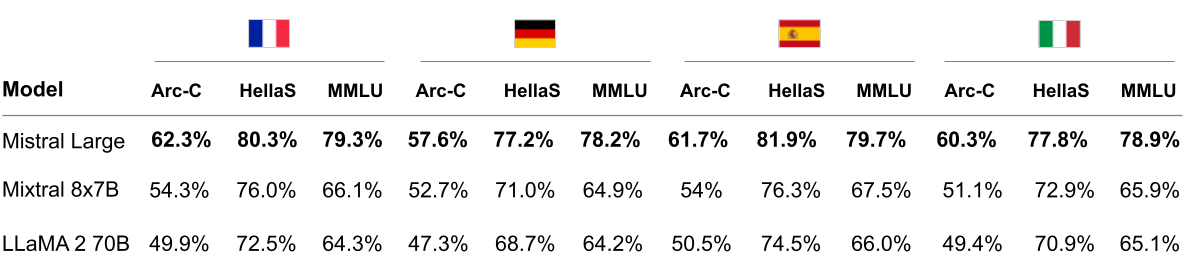

Mistral Large has native multilingual capacities. It strongly outperforms LLaMA 2 70B on the HellaSwag, Arc Challenge and MMLU benchmarks in French, German, Spanish and Italian.

Figure 3: Comparison of Mistral Large, Mixtral 8x7B and LLaMA 2 70B on HellaSwag, Arc Challenge and MMLU in French, German, Spanish and Italian.

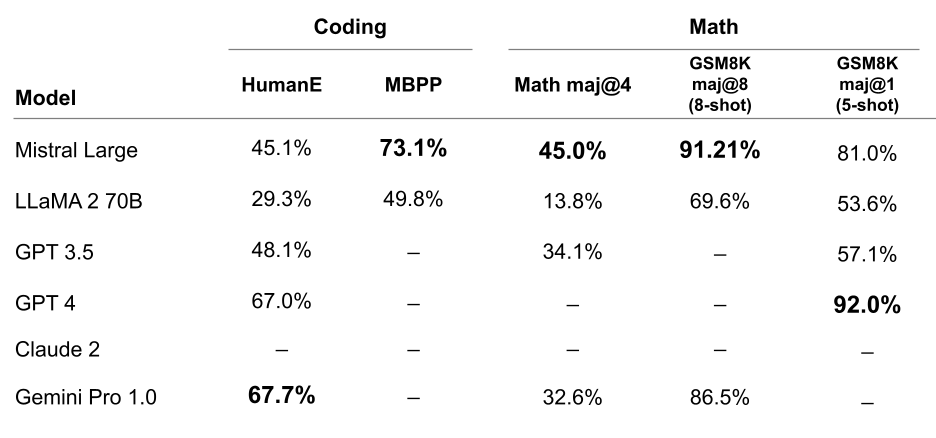

Mistral Large shows top performance in coding and math tasks. In the figure below, we report the coding and math performance of some of the leading LLMs across a suite of popular benchmarks.

Figure 4: Performance of the leading LLMs on the market on popular coding and math benchmarks: HumanEval pass@1, MBPP pass@1, Math maj@4, GSM8K maj@8 (8-shot) and GSM8K maj@1 (5-shot).

Complementing Mistral Large, we’re also launching Mistral Small, an optimized model designed for low latency workloads. Offering superior performance and reduced latency compared to previous models, Mistral Small bridges the gap between our open-weight offerings and our flagship model.

To streamline accessibility, we’re simplifying our endpoint offerings, providing open-weight endpoints with competitive pricing alongside new optimized model endpoints. Our benchmarks offer comprehensive insights into performance and cost tradeoffs, empowering organizations to make informed decisions.

Specifically, Mistral Small outperforms Mixtral 8x7B and has lower latency, which makes it a refined intermediate solution between our open-weight offering and our flagship model.

Mistral Small benefits from the same innovation as Mistral Large regarding RAG-enablement and function calling.

Mistral Small and Mistral Large now support JSON format mode and function calling, enabling seamless integration with developers’ workflows. This functionality enhances interaction with our models, facilitating structured data extraction and complex interactions with internal systems.
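As a rough illustration of JSON format mode, the sketch below builds a chat request body that asks for JSON-only output and then validates a reply. The `response_format` field and its `json_object` value are assumptions based on the common chat-completion request layout, not details given in this release, and the helper names are hypothetical. No network call is made.

```python
import json

# Assumption: model identifier taken from the endpoint names in this release.
MODEL = "mistral-small"


def build_json_mode_request(prompt: str, model: str = MODEL) -> dict:
    """Build a chat request body that asks the model for JSON-only output."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        # Assumed field name and value for switching on JSON format mode.
        "response_format": {"type": "json_object"},
    }


def parse_json_reply(content: str) -> dict:
    """Parse the assistant's text and insist that it is a JSON object."""
    data = json.loads(content)  # raises ValueError on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    return data


request_body = build_json_mode_request("Extract the city from: 'Paris, 26 Feb'")
# Hand-written stand-in for a model reply, used here only to show parsing.
parsed = parse_json_reply('{"city": "Paris"}')
```

Validating the reply on the client side stays useful even with JSON mode enabled, since downstream code usually depends on specific keys being present.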

Function calling lets developers interface Mistral endpoints with a set of their own tools, enabling more complex interactions with internal code, APIs or databases. You can learn more in our function calling guide.
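To make the idea concrete, here is a minimal sketch of the client side of function calling: a tool description that would be sent with the request, and a dispatcher that runs whichever local function the model asks for. The tool `get_order_status`, its data, and the exact shape of the tool description and tool call are hypothetical, chosen to resemble the common JSON Schema style layout; the function calling guide is the authority on the real format.

```python
import json


def get_order_status(order_id: str) -> dict:
    """Hypothetical internal tool; a real one would query a database or API."""
    orders = {"A100": "shipped", "A101": "processing"}
    return {"order_id": order_id, "status": orders.get(order_id, "unknown")}


# Registry mapping tool names to local Python callables.
TOOLS = {"get_order_status": get_order_status}

# Tool description to send alongside the chat messages (assumed layout).
TOOL_SPECS = [{
    "type": "function",
    "function": {
        "name": "get_order_status",
        "description": "Look up the status of an order by its ID.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    },
}]


def dispatch_tool_call(tool_call: dict) -> str:
    """Run the requested tool and return its result as a JSON string."""
    name = tool_call["function"]["name"]
    args = json.loads(tool_call["function"]["arguments"])
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return json.dumps(TOOLS[name](**args))


# Hand-written example of a tool call, shaped like what a model might return.
example_call = {"function": {"name": "get_order_status",
                             "arguments": '{"order_id": "A100"}'}}
result = dispatch_tool_call(example_call)
```

In a full exchange, the string in `result` would be sent back to the model as a tool message so that it can compose the final answer. Keeping an explicit registry means the model can only trigger functions the developer has deliberately exposed.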

Function calling and JSON format are only available on mistral-small and mistral-large. We will be adding formatting to all endpoints shortly, as well as enabling more fine-grained format definitions.

Mistral Large is now available on la Plateforme and Azure, with Mistral Small offering optimized performance for latency-sensitive applications. Mistral Large is also exposed on our beta assistant demonstrator, le Chat. Try Mistral today and join us in shaping the future of AI innovation. We value your feedback as we continue to push the boundaries of language model technology.

———-

First published on EuropaWIRE.